hyperparameters

hyperparameters — my Raindrop.io articles

A Complete End-to-End Coding Guide to MLflow Experiment Tracking, Hyperparameter Optimization, Model Evaluation, and Live Model Deployment

A Coding Guide to Implement Advanced Hyperparameter Optimization with Optuna using Pruning Multi-Objective Search, Early Stopping, and Deep Visual Analysis

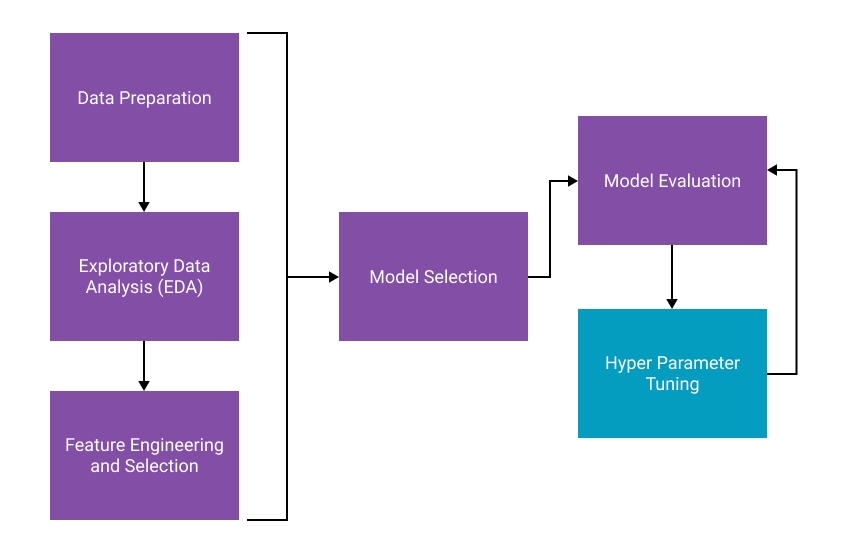

In machine learning, finding the perfect settings for a model to work at its best can be like looking for a needle in a haystack. This process, known as hyperparameter optimization, involves tweaking the settings that govern how the model learns. It's crucial because the right combination can significantly improve a model's accuracy and efficiency. However, this process can be time-consuming and complex, requiring extensive trial and error. Traditionally, researchers and developers have resorted to manual tuning or using grid search and random search methods to find the best hyperparameters. These methods do work to some extent but could be…
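For readers unfamiliar with the two traditional methods the excerpt names, here is a minimal sketch of grid search and random search in plain Python. It is not taken from the linked article: `validation_loss` is a hypothetical stand-in for training and scoring a real model, and the parameter names, grid values, and bounds are illustrative assumptions.

```python
import itertools
import random


def validation_loss(learning_rate, regularization):
    # Hypothetical stand-in for "train a model and measure its error".
    # By construction its minimum is at learning_rate=0.1, regularization=0.01.
    return (learning_rate - 0.1) ** 2 + (regularization - 0.01) ** 2


def grid_search(grid):
    """Evaluate every combination of the listed values and keep the best."""
    names = list(grid)
    best_params, best_loss = None, float("inf")
    for values in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        loss = validation_loss(**params)
        if loss < best_loss:
            best_params, best_loss = params, loss
    return best_params, best_loss


def random_search(bounds, n_trials, seed=0):
    """Sample each hyperparameter uniformly within its bounds, n_trials times."""
    rng = random.Random(seed)  # seeded so repeated runs give the same result
    best_params, best_loss = None, float("inf")
    for _ in range(n_trials):
        params = {name: rng.uniform(low, high) for name, (low, high) in bounds.items()}
        loss = validation_loss(**params)
        if loss < best_loss:
            best_params, best_loss = params, loss
    return best_params, best_loss


# Illustrative search spaces (assumed values, not from the source).
grid = {
    "learning_rate": [0.001, 0.01, 0.1, 1.0],
    "regularization": [0.0, 0.01, 0.1],
}
bounds = {"learning_rate": (0.001, 1.0), "regularization": (0.0, 0.1)}

grid_best, grid_loss = grid_search(grid)                   # 4 * 3 = 12 evaluations
rand_best, rand_loss = random_search(bounds, n_trials=12)  # same budget

print("grid search:  ", grid_best, grid_loss)
print("random search:", rand_best, rand_loss)
```

Both searches spend the same budget of 12 evaluations. Grid search only ever tries the listed values, so its cost multiplies with every hyperparameter added, while random search can land anywhere inside the bounds but offers no guarantee of hitting a good region.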

As computing systems become more complex, it is becoming harder for programmers to keep their code optimized as the hardware gets updated. Autotuners try to alleviate this by hiding as many archite…

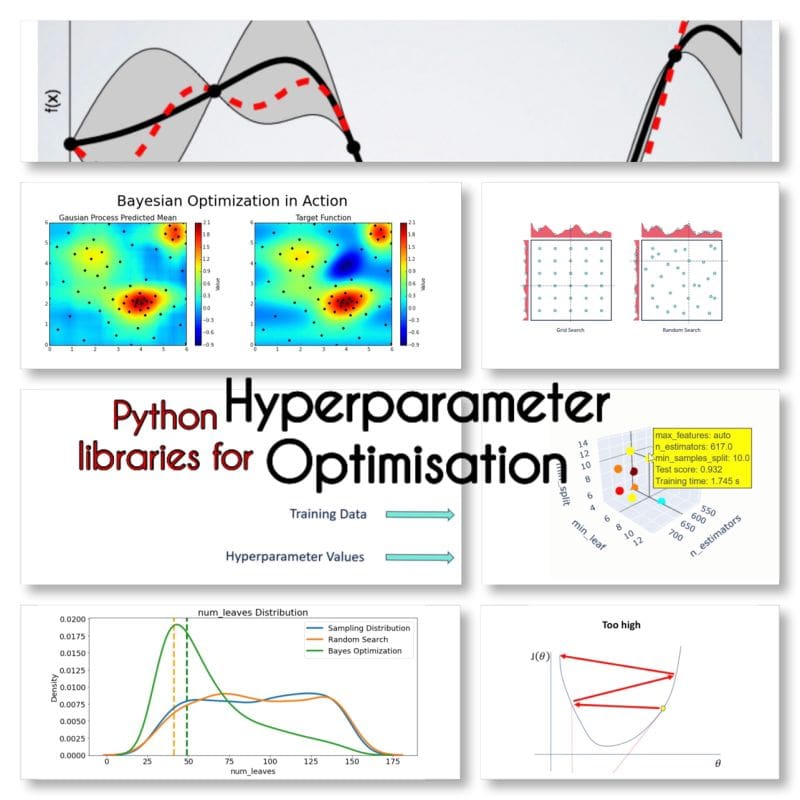

Become familiar with some of the most popular Python libraries available for hyperparameter optimization.

How to optimize the hyperparameters of a machine learning model and how to speed up the process

Easily and efficiently optimize your model’s hyperparameters using Optuna, with a mini project

Automate your hyperparameter tuning with Sklearn Pipelines and Hyperopt for multiple models in a single Python call. Let's dig into the process...