paperswithcode

paperswithcode — my Raindrop.io articles

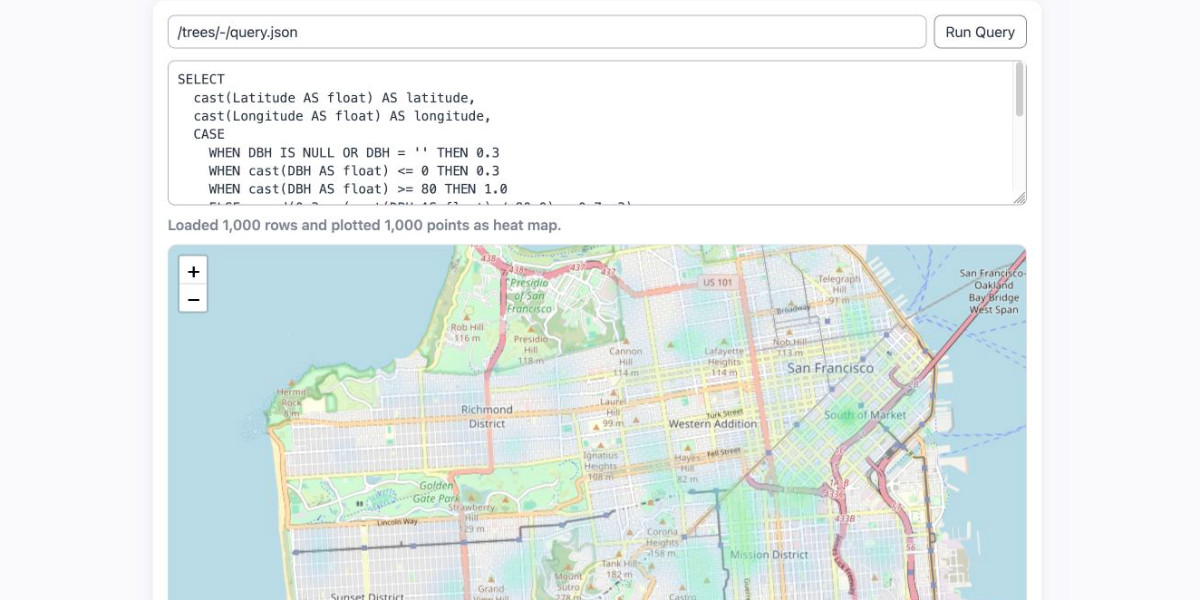

Here's the handout I prepared for my NICAR 2026 workshop "Coding agents for data analysis" - a three-hour session aimed at data journalists demonstrating ways that tools like Claude …

In June, I shared a bonus article with my curated and bookmarked research paper lists to the paid subscribers who make this Substack possible.

A curated list of LLM research papers from July–December 2025, organized by reasoning models, inference-time scaling, architectures, training efficiency, and...

Keep up with the latest ML research

Runaway success and underfunding have led to growing pains for the preprint server

Should you use PyTorch or TensorFlow in 2023? This guide walks through the major pros and cons of PyTorch vs TensorFlow, and how you can pick the right framework.

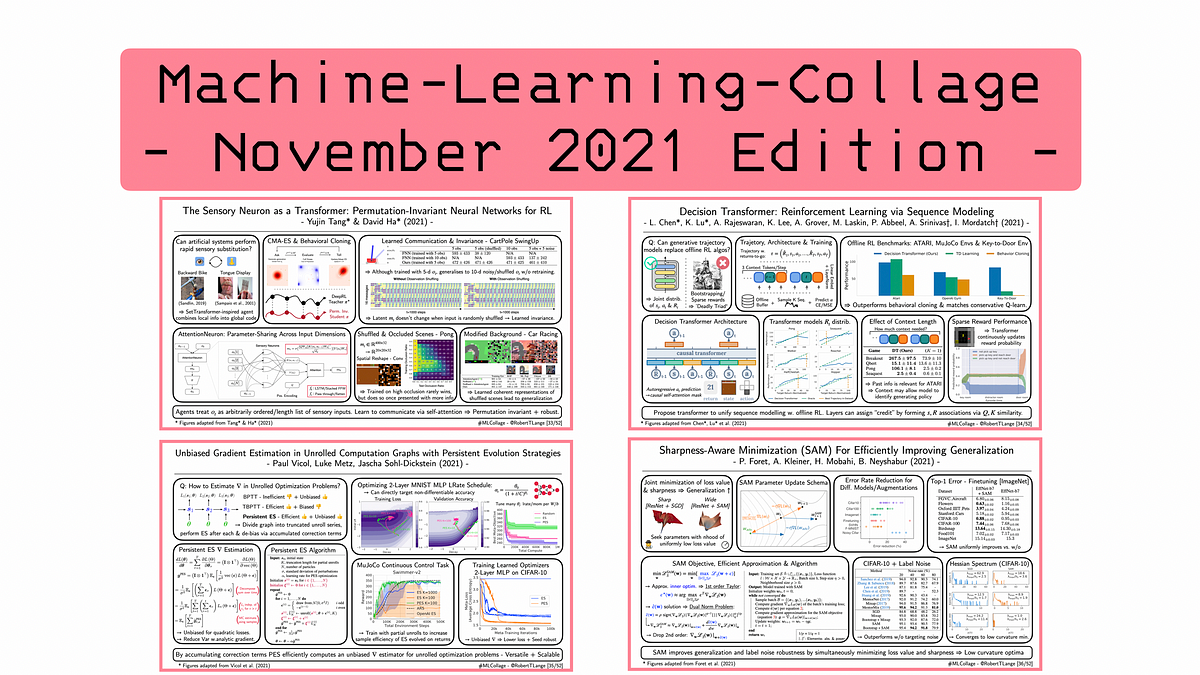

A curated list of the latest breakthroughs in AI (in 2021) by release date with a clear video explanation, link to a more in-depth article, and code. - louisfb01/best_AI_papers_2021

From Sensory Substitution to Decision Transformers, Persistent Evolution Strategies and Sharpness-Aware Minimization

Papers With Code highlights trending Machine Learning research and the code to implement it.

2284 methods • 143838 papers with code.

Papers with Code indexes various machine learning artifacts — papers, code, results — to facilitate discovery and comparison. Using this…

**Representation Learning** is a process in machine learning where algorithms extract meaningful patterns from raw data to create representations that are easier to understand and process. These representations can be designed for interpretability, reveal hidden features, or be used for transfer learning. They are valuable across many fundamental machine learning tasks like [image classification](/task/image-classification) and [retrieval](/task/image-retrieval).

Deep neural networks can be considered representation learning models that typically encode information and project it into a different subspace. These representations are then usually passed on to a simple model, for instance a linear classifier, which is trained to solve the task at hand.

Representation learning can be divided into:

- **Supervised representation learning**: learning representations on task A using annotated data, then using them to solve task B
- **Unsupervised representation learning**: learning representations on a task in an unsupervised way (label-free data). These are then used to address downstream tasks, reducing the need for annotated data when learning new tasks. Powerful models like [GPT](/method/gpt) and [BERT](/method/bert) leverage unsupervised representation learning to tackle language tasks.

More recently, [self-supervised learning (SSL)](/task/self-supervised-learning) has become one of the main drivers behind unsupervised representation learning in fields like computer vision and NLP.

Here are some additional readings to go deeper on the task:

- [Representation Learning: A Review and New Perspectives](/paper/representation-learning-a-review-and-new) - Bengio et al. (2012)
- [A Few Words on Representation Learning](https://sthalles.github.io/a-few-words-on-representation-learning/) - Thalles Silva

( Image credit: [Visualizing and Understanding Convolutional Networks](https://arxiv.org/pdf/1311.2901.pdf) )
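The "representation, then linear classifier" pattern described above can be sketched in a few lines. This is only an illustration under stated assumptions: `encode` is a hypothetical, hand-written stand-in for a frozen pretrained network, the toy data (an inner and an outer ring of 2D points) is made up, and the linear classifier is a plain perceptron. The two rings cannot be separated by a line in the raw (x, y) space, but they become linearly separable once mapped to the representation.

```python
def encode(point):
    """Hypothetical frozen encoder: maps a raw 2D point to a representation.

    A real encoder would be a trained network; here it is just the squared
    radius plus a constant bias feature.
    """
    x, y = point
    return [x * x + y * y, 1.0]


def train_linear_probe(features, labels, epochs=50):
    """Train a perceptron (a linear classifier) on fixed representations."""
    weights = [0.0] * len(features[0])
    for _ in range(epochs):
        for z, y in zip(features, labels):
            score = sum(w * v for w, v in zip(weights, z))
            if y * score <= 0:  # misclassified (or on the boundary): update
                weights = [w + y * v for w, v in zip(weights, z)]
    return weights


def predict(weights, z):
    return 1 if sum(w * v for w, v in zip(weights, z)) > 0 else -1


# Toy data (assumed): label -1 for the inner ring, +1 for the outer ring.
inner = [(0.5, 0.0), (0.0, 0.5), (-0.5, 0.0), (0.0, -0.5)]
outer = [(2.0, 0.0), (0.0, 2.0), (-2.0, 0.0), (0.0, -2.0)]
points = inner + outer
labels = [-1] * len(inner) + [1] * len(outer)

# The encoder stays fixed; only the linear probe is trained.
features = [encode(p) for p in points]
weights = train_linear_probe(features, labels)
predictions = [predict(weights, z) for z in features]
```

Only the probe's weights change during training, which mirrors how a learned representation is often evaluated by fitting a linear classifier on top of it.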

**Image Retrieval** is a fundamental and long-standing computer vision task that involves finding images similar to a provided query from a large database. It's often considered a form of fine-grained, instance-level classification. Beyond being integral to image recognition alongside [classification](/task/image-classification) and [detection](/task/image-detection), it also holds substantial business value by helping users discover images that match their interests or requirements, guided by visual similarity or other parameters. ( Image credit: [DELF](https://github.com/tensorflow/models/tree/master/research/delf) )
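One common way to frame "finding images similar to a query" is nearest-neighbor search over embedding vectors. The sketch below assumes each image has already been mapped to a small feature vector; the file names and the three-dimensional embeddings are invented for illustration, and cosine similarity is just one possible similarity measure.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve(query, database, top_k=2):
    """Return the names of the top_k database images most similar to the query."""
    scored = [(cosine_similarity(query, vec), name) for name, vec in database.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:top_k]]


# Hypothetical embeddings; a real system would compute these with a trained model.
database = {
    "beach_1.jpg": [0.9, 0.1, 0.0],
    "beach_2.jpg": [0.8, 0.2, 0.1],
    "forest_1.jpg": [0.1, 0.9, 0.2],
    "city_1.jpg": [0.0, 0.2, 0.9],
}

query_embedding = [1.0, 0.0, 0.0]
results = retrieve(query_embedding, database)
```

A brute-force scan like this is fine for a handful of images; large databases typically rely on approximate nearest-neighbor indexes instead.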

**Denoising** is a task in image processing and computer vision that aims to remove or reduce noise from an image. Noise can enter an image for various reasons, such as camera sensor limitations, lighting conditions, and compression artifacts. The goal of denoising is to recover the original image, which is considered to be noise-free, from a noisy observation. ( Image credit: [Beyond a Gaussian Denoiser](https://arxiv.org/pdf/1608.03981v1.pdf) )
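To make the "noisy observation" setup concrete, here is a minimal sketch that assumes additive Gaussian noise on a small synthetic grayscale image and uses a 3x3 mean filter as a baseline denoiser. The image, the noise level, and the filter are illustrative choices, not the method of the paper credited above.

```python
import random


def add_gaussian_noise(image, sigma, rng):
    """Noisy observation = clean image + zero-mean Gaussian noise."""
    return [[pixel + rng.gauss(0.0, sigma) for pixel in row] for row in image]


def mean_filter(image):
    """Replace each pixel by the mean of its 3x3 neighborhood (clipped at borders)."""
    height, width = len(image), len(image[0])
    output = []
    for r in range(height):
        row = []
        for c in range(width):
            window = [
                image[rr][cc]
                for rr in range(max(0, r - 1), min(height, r + 2))
                for cc in range(max(0, c - 1), min(width, c + 2))
            ]
            row.append(sum(window) / len(window))
        output.append(row)
    return output


def mse(a, b):
    """Mean squared error between two images of the same shape."""
    total = sum((x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb))
    return total / (len(a) * len(a[0]))


# Synthetic clean image (assumed): a smooth horizontal gradient from 0 to 1.
size = 16
clean = [[c / (size - 1) for c in range(size)] for _ in range(size)]

rng = random.Random(0)  # fixed seed so the run is repeatable
noisy = add_gaussian_noise(clean, sigma=0.1, rng=rng)
denoised = mean_filter(noisy)

noisy_error = mse(clean, noisy)
denoised_error = mse(clean, denoised)
```

On a smooth image like this one, averaging neighbors cancels much of the noise, so `denoised_error` comes out lower than `noisy_error`. On images with sharp edges the same filter also blurs detail, which is part of what learned denoisers try to avoid.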

**Domain Adaptation** is the task of adapting models across domains. This is motivated by the challenge where the test and training datasets come from different data distributions due to some factor. Domain adaptation aims to build machine learning models that generalize to a target domain and deal with the discrepancy across domain distributions.

Further readings:

- [A Brief Review of Domain Adaptation](https://paperswithcode.com/paper/a-brief-review-of-domain-adaptation)

( Image credit: [Unsupervised Image-to-Image Translation Networks](https://arxiv.org/pdf/1703.00848v6.pdf) )
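The distribution discrepancy described above can be illustrated with a toy one-dimensional example. The sketch assumes the target domain differs from the source only by a constant offset in the feature, and it uses simple mean alignment as the adaptation step. All data values are made up, and real domain adaptation methods handle far more complex shifts; target labels are used here only to measure accuracy, never to adapt.

```python
def mean(values):
    return sum(values) / len(values)


def fit_threshold(features, labels):
    """Toy classifier: threshold halfway between the two class means."""
    class0 = [x for x, y in zip(features, labels) if y == 0]
    class1 = [x for x, y in zip(features, labels) if y == 1]
    return (mean(class0) + mean(class1)) / 2


def accuracy(features, labels, threshold):
    predictions = [1 if x > threshold else 0 for x in features]
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


# Source domain: labeled training data.
source_x = [-0.2, 0.0, 0.2, 1.8, 2.0, 2.2]
source_y = [0, 0, 0, 1, 1, 1]

# Target domain (assumed): same classes, but every feature is offset by +3.
target_x = [2.8, 3.0, 3.2, 4.8, 5.0, 5.2]
target_y = [0, 0, 0, 1, 1, 1]  # held out, used only for evaluation

threshold = fit_threshold(source_x, source_y)

# Without adaptation, the source threshold labels every target point as class 1.
accuracy_before = accuracy(target_x, target_y, threshold)

# Mean alignment: shift target features so their mean matches the source mean.
offset = mean(source_x) - mean(target_x)
aligned_target_x = [x + offset for x in target_x]
accuracy_after = accuracy(aligned_target_x, target_y, threshold)
```

Mean alignment works here because both domains have the same class balance and differ only by an offset; when those assumptions fail, matching means alone can hurt rather than help.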