regressions

regressions — my Raindrop.io articles

Multicollinearity occurs when predictors in your regression model are highly correlated with one another. This inflates the variance of the coefficient estimates and makes it hard to isolate each variable's effect.
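A common way to put a number on "highly correlated" is the variance inflation factor, $\text{VIF}_j = 1/(1 - R_j^2)$, where $R_j^2$ comes from regressing predictor $j$ on the other predictors. The sketch below assumes exactly two predictors, in which case $R_j^2$ is just the squared Pearson correlation. The helper names `pearson_r` and `vif_two_predictors` are hypothetical, and the linked article may use a different diagnostic.

```python
def pearson_r(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((ai - mean_a) * (bi - mean_b) for ai, bi in zip(a, b))
    var_a = sum((ai - mean_a) ** 2 for ai in a)
    var_b = sum((bi - mean_b) ** 2 for bi in b)
    return cov / (var_a * var_b) ** 0.5


def vif_two_predictors(x1, x2):
    """VIF for either predictor in a two-predictor model (hypothetical helper).

    With exactly two predictors, regressing one on the other gives R^2 = r^2,
    so both predictors share the same VIF.
    """
    r2 = pearson_r(x1, x2) ** 2
    if r2 >= 1.0:
        return float("inf")  # perfect collinearity: coefficients are not identifiable
    return 1.0 / (1.0 - r2)


print(vif_two_predictors([1, 2, 3, 4], [1, -1, -1, 1]))  # uncorrelated predictors → 1.0
print(vif_two_predictors([1, 2, 3, 4], [1, 2, 3, 5]))    # strongly correlated, about 29.2
```

A frequently quoted rule of thumb treats a VIF above 5 or 10 as a warning sign. It is only a heuristic, not a hard cutoff.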

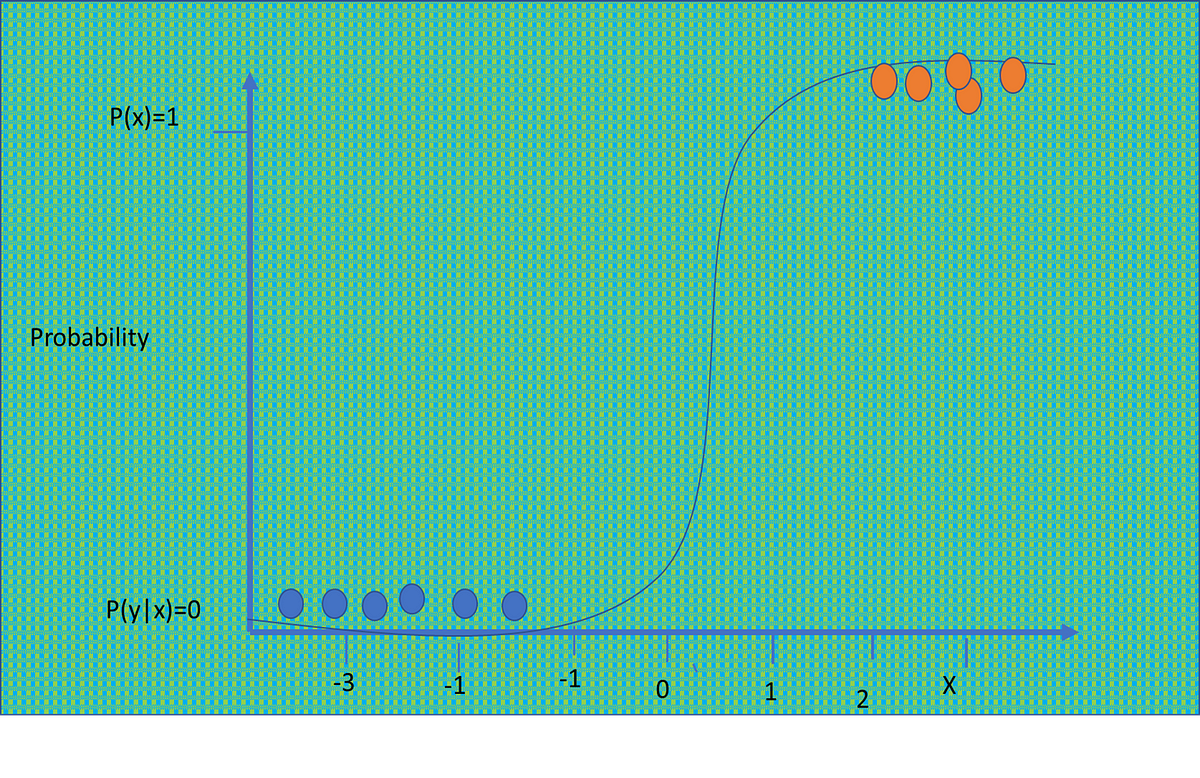

Learn when probit regression outperforms logistic regression for binary outcome modeling.

A standard linear regression assumes a continuous outcome with normally distributed errors. That just doesn’t hold up for many outcomes, such as counts or binary events. That’s where generalized linear models (GLMs) come in.
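Poisson regression with a log link is one concrete GLM for count outcomes. The minimal sketch below fits it by Newton-Raphson; for this canonical link, Newton-Raphson coincides with Fisher scoring (IRLS). It assumes a single predictor plus an intercept and made-up toy counts. The name `poisson_glm_fit` is hypothetical, not an API from the linked article.

```python
import math


def poisson_glm_fit(x, y, iters=25):
    """Fit log(E[y]) = b0 + b1*x by Newton-Raphson (hypothetical helper)."""
    b0, b1 = math.log(sum(y) / len(y)), 0.0  # start from an intercept-only model
    for _ in range(iters):
        mu = [math.exp(b0 + b1 * xi) for xi in x]  # inverse link: mean = exp(linear predictor)
        # Score (gradient of the log-likelihood): X^T (y - mu)
        g0 = sum(yi - mi for yi, mi in zip(y, mu))
        g1 = sum(xi * (yi - mi) for xi, yi, mi in zip(x, y, mu))
        # Information matrix X^T W X with W = diag(mu)
        h00 = sum(mu)
        h01 = sum(xi * mi for xi, mi in zip(x, mu))
        h11 = sum(xi * xi * mi for xi, mi in zip(x, mu))
        det = h00 * h11 - h01 * h01
        # Solve the 2x2 system H * step = g and take the Newton step
        b0 += (h11 * g0 - h01 * g1) / det
        b1 += (h00 * g1 - h01 * g0) / det
    return b0, b1


# Toy counts, made up for illustration
x = [0, 1, 2, 3, 4]
y = [1, 2, 4, 7, 12]
b0, b1 = poisson_glm_fit(x, y)
print(f"log(E[y]) = {b0:.3f} + {b1:.3f} * x")
```

Unlike a linear model, predictions come from `exp(b0 + b1 * x)`, so they stay positive, and the variance is tied to the mean rather than held constant.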

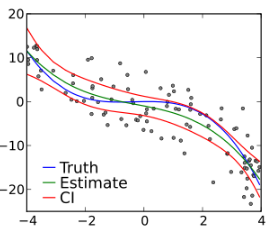

Leverage helps us identify observations that could significantly influence our regression results, even in ways that aren't immediately obvious.
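In simple linear regression (one predictor plus an intercept), the leverage of observation $i$ is the $i$-th diagonal entry of the hat matrix. It reduces to $h_{ii} = 1/n + (x_i - \bar{x})^2 / \sum_j (x_j - \bar{x})^2$. The sketch below computes it in plain Python. The function name `leverages` and the toy $x$ values are illustrative. The $2p/n$ cutoff is a common rule of thumb, not something taken from the linked article.

```python
def leverages(x):
    """Hat-matrix diagonal h_ii for a simple linear regression y = b0 + b1*x."""
    n = len(x)
    x_bar = sum(x) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    return [1.0 / n + (xi - x_bar) ** 2 / sxx for xi in x]


x = [1, 2, 3, 4, 20]           # toy data: the last point sits far from the rest
h = leverages(x)
p = 2                          # number of parameters: intercept and slope
threshold = 2 * p / len(x)     # rule-of-thumb cutoff 2p/n
for xi, hi in zip(x, h):
    flag = "high leverage" if hi > threshold else ""
    print(f"x = {xi:>2}: h = {hi:.3f} {flag}")
```

Leverage depends only on the predictor values, not on `y`. A high-leverage point is therefore only *potentially* influential; whether it actually pulls the fit also depends on its residual.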

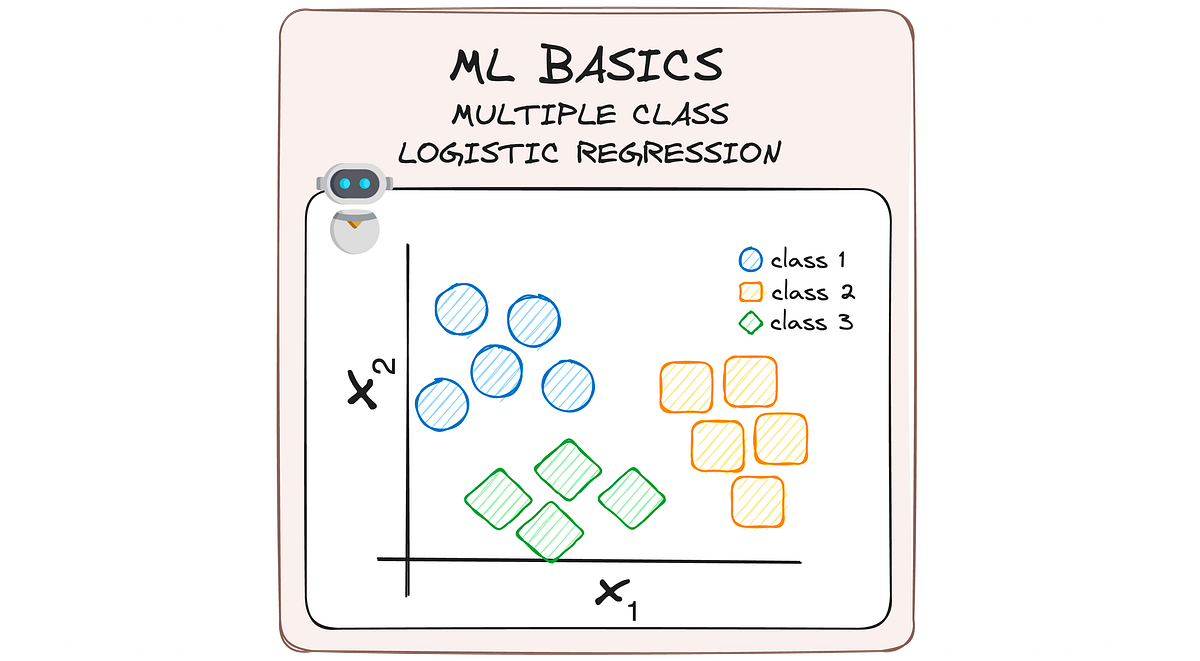

MLBasics #3: From Binary to Multiclass — A Journey Through Logistic Regression Upgrades

An alternative to logistic regression under special conditions

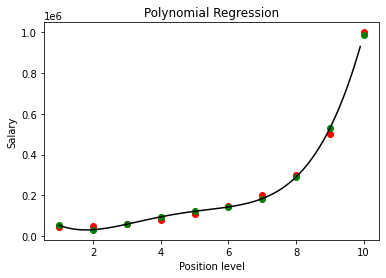

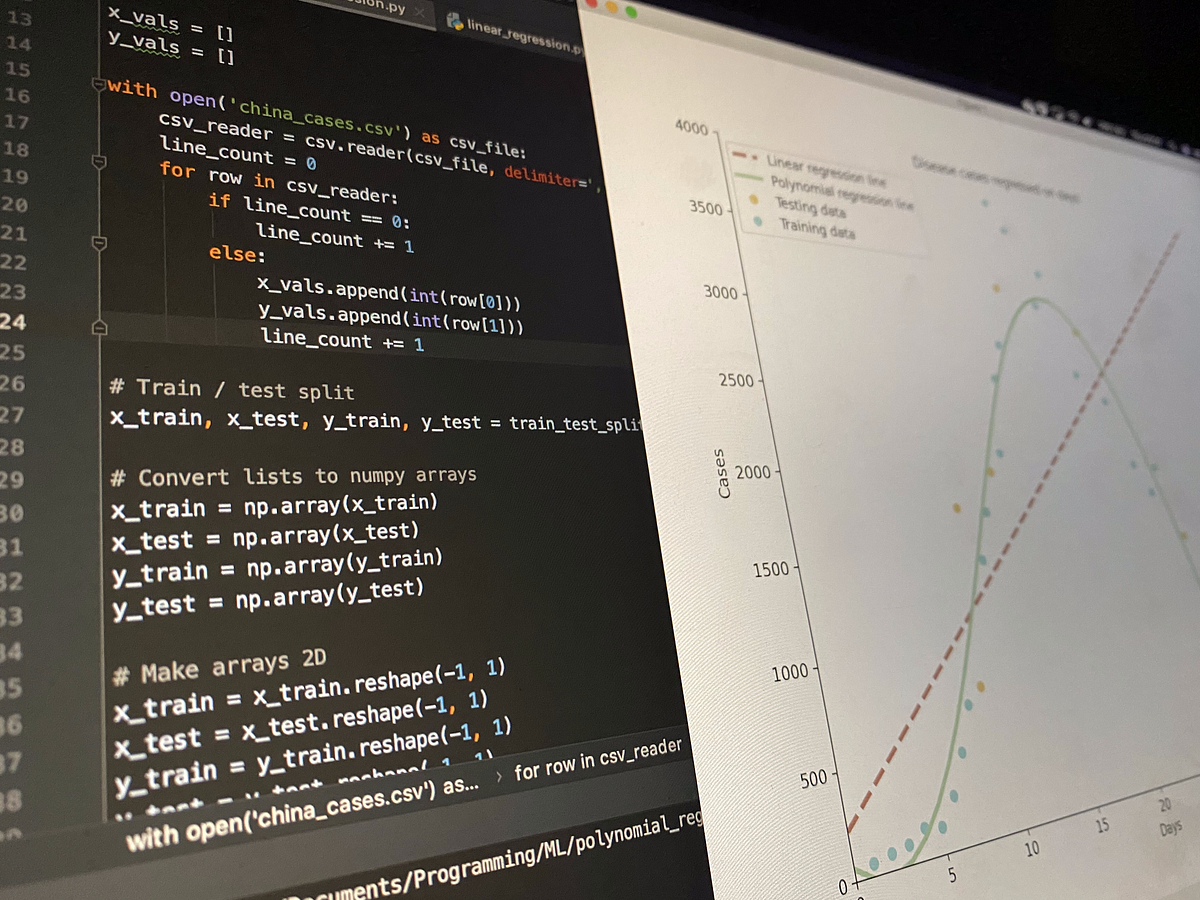

Learn how to build a polynomial regression model to predict values for a non-linear dataset.

How do hazards and maximum likelihood estimates predict event rankings?

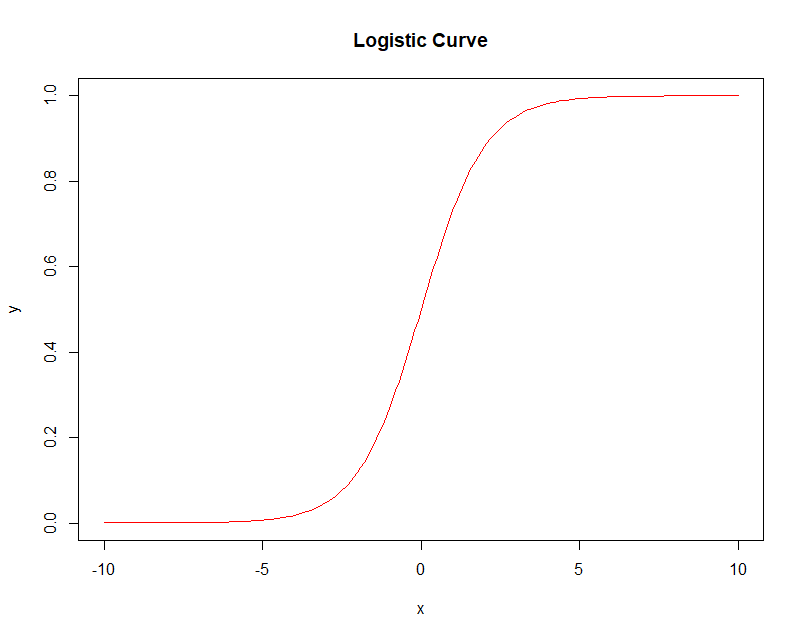

Logistic regression is one of the most frequently used machine learning techniques for classification. However, though seemingly simple…

Statistics in R Series: Deviance, Log-likelihood Ratio, Pseudo R² and AIC/BIC

Who should read this blog: someone who is new to linear regression, or someone who wants to understand its jargon. Linear regression is generally the first step in anyone’s data science journey. When you hear the words linear and regression, something like this pops up in your mind: X1, X2,… (Linear Regression Analysis – Part 1; code repository: https://github.com/DhruvilKarani/Linear-Regression-Experiments)

We’ll show how to use the difference-in-differences (DID) model to estimate the effect of hurricanes on house prices.
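In the simplest 2×2 setup (treated vs. control, before vs. after), the core DID estimate is the change in the treated group's mean minus the change in the control group's mean. Below is a minimal sketch, assuming made-up prices and a hypothetical `did_estimate` helper. A regression with a treatment × post interaction and no covariates gives the same point estimate in this 2×2 case.

```python
from statistics import mean


def did_estimate(treated_pre, treated_post, control_pre, control_post):
    """2x2 difference-in-differences: (treated change) - (control change) in group means."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Made-up house prices (in $1000s), purely for illustration
effect = did_estimate(
    treated_pre=[200, 210], treated_post=[190, 200],
    control_pre=[180, 190], control_post=[195, 205],
)
print(effect)  # → -25
```

The control group's change stands in for what would have happened to the treated group without the event. The estimate is therefore causal only under the parallel-trends assumption.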

A hands-on tutorial on using different regression algorithms effectively

In statistics, principal component regression (PCR) is a regression analysis technique that is based on principal component analysis (PCA). More specifically, PCR is used for estimating the unknown regression coefficients in a standard linear regression model.
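The steps behind PCR can be sketched with NumPy:

1. Center the predictors.
2. Take the first `k` principal directions from an SVD.
3. Regress `y` on the component scores.
4. Map the coefficients back to the original predictors.

This is a minimal sketch, assuming NumPy is available and using simulated data. `pcr_fit` is a hypothetical helper, and choosing `k` (e.g. by cross-validation) is left out.

```python
import numpy as np


def pcr_fit(X, y, k):
    """Principal component regression: OLS of y on the first k principal components of X."""
    x_mean = X.mean(axis=0)
    y_mean = y.mean()
    Xc = X - x_mean
    # Right singular vectors of the centered design are the PCA loading vectors
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    V_k = Vt[:k].T                       # p x k loadings
    Z = Xc @ V_k                         # n x k component scores
    gamma, *_ = np.linalg.lstsq(Z, y - y_mean, rcond=None)
    beta = V_k @ gamma                   # coefficients on the original predictors
    intercept = y_mean - x_mean @ beta
    return intercept, beta


# Simulated data, for illustration only
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 3))
y = 0.5 + X @ np.array([1.0, 2.0, -1.0]) + rng.normal(scale=0.1, size=100)

intercept_1, beta_1 = pcr_fit(X, y, k=1)  # keep only the leading component
intercept_3, beta_3 = pcr_fit(X, y, k=3)  # keep all components
print(beta_1, beta_3)
```

With `k` equal to the number of predictors, PCR reproduces ordinary least squares. The regularizing effect comes only from dropping the trailing components.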

Machine Learning from Scratch: Part 4

It is a simple yet very efficient algorithm.

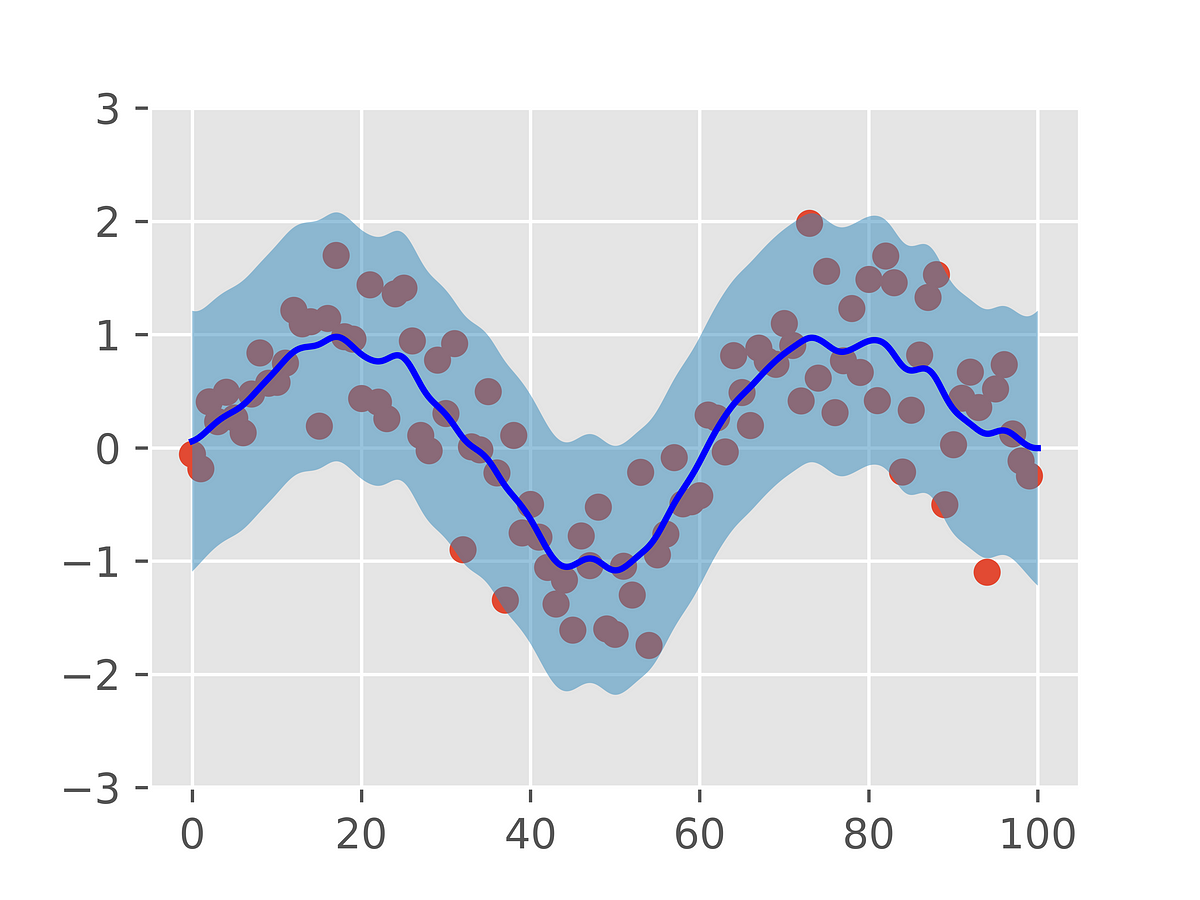

Gaussian Process Regression is a remarkably powerful class of machine learning algorithms. Here, we introduce them from first principles.

Ever wondered how to implement a simple baseline model for multi-class problems? Here is one example (code included).

A deep dive into the theory and application behind this machine learning algorithm in Python, by a student.

Isotonic regression is a method for obtaining a monotonic fit for 1-dimensional data. Let’s say we have data $(x_1, y_1), \dots, (x_n, y_n) \in \mathbb{R}^2$ such that $x_1 < x_2 < \dots < x_n$. (We assume no ties among the $x_i$’s for simplicity.) Informally, isotonic regression looks for $\beta_1, \dots, \beta_n \in \mathbb{R}$ such that the $\beta_i$’s approximate …
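One standard way to compute the least-squares isotonic fit is the pool adjacent violators algorithm (PAVA). It scans left to right and merges neighboring blocks whenever their means violate the non-decreasing order. Below is a minimal unweighted sketch, assuming the responses are already sorted by $x$ with no ties. `isotonic_regression` is a hypothetical name, and the linked post may present a different algorithm.

```python
def isotonic_regression(y):
    """Pool Adjacent Violators: least-squares non-decreasing fit to y (assumed sorted by x)."""
    blocks = []  # each block is [sum of values, count of values]
    for value in y:
        blocks.append([value, 1])
        # Merge while the previous block's mean exceeds the last block's mean
        # (compare s_prev / c_prev > s_last / c_last without dividing)
        while len(blocks) > 1 and blocks[-2][0] * blocks[-1][1] > blocks[-1][0] * blocks[-2][1]:
            s, c = blocks.pop()
            blocks[-1][0] += s
            blocks[-1][1] += c
    # Every point in a block gets that block's mean
    fit = []
    for s, c in blocks:
        fit.extend([s / c] * c)
    return fit


print(isotonic_regression([1, 3, 2, 4]))  # → [1.0, 2.5, 2.5, 4.0]
```

Each merge replaces a violating pair of blocks by their pooled mean. That mean is the least-squares constant for the combined block, which is why the result is the squared-error optimum.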

In this article, we show that the issue with polynomial regression is not overfitting but numerical precision. Even if done right, numerical precision remains an insurmountable challenge. We focus here on step-wise polynomial regression, which is supposed to be more stable than the traditional model. In step-wise regression, we estimate one coefficient at a… (Deep Dive into Polynomial Regression and Overfitting)
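A quick way to see the numerical-precision problem is to track the condition number of the polynomial design matrix as the degree grows. This sketch assumes NumPy, equally spaced $x$ in $[0, 1]$, and the plain monomial basis $1, x, \dots, x^d$. For contrast, it also uses a Chebyshev basis on $x$ rescaled to $[-1, 1]$. That is one common remedy, not necessarily what the linked article recommends.

```python
import numpy as np
from numpy.polynomial import chebyshev

x = np.linspace(0.0, 1.0, 50)   # equally spaced sample points (illustrative)
degrees = [2, 5, 10, 15]

# Condition number of the monomial design matrix with columns 1, x, x^2, ..., x^d
monomial_cond = [np.linalg.cond(np.vander(x, d + 1, increasing=True)) for d in degrees]

# Same degrees, but with Chebyshev polynomials evaluated on x rescaled to [-1, 1]
t = 2.0 * x - 1.0
cheb_cond = [np.linalg.cond(chebyshev.chebvander(t, d)) for d in degrees]

for d, m, c in zip(degrees, monomial_cond, cheb_cond):
    print(f"degree {d:2d}: monomial cond = {m:.1e}, Chebyshev cond = {c:.1e}")
```

A large condition number means small rounding errors can be amplified into large errors in the fitted coefficients. NumPy's `numpy.polynomial.Polynomial.fit` also maps `x` onto the window $[-1, 1]$ by default before fitting.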

Using Support Vector Machines (SVMs) for Regression

Using residual plots to validate your regression models

Interested in learning the concepts behind Logistic Regression (LogR)? Looking for a concise introduction to LogR? This article is for you. Includes a Python implementation and links to an R script as well.