rnns

rnns — my Raindrop.io articles

Mastercard's Decision Intelligence Pro uses recurrent neural networks to analyze 160 billion yearly transactions in under 50 milliseconds, delivering precise fraud risk scores at 70,000 transactions per second during peak periods.

Deep learning architectures have revolutionized the field of artificial intelligence, offering innovative solutions for complex problems across various domains, including computer vision, natural language processing, speech recognition, and generative models. This article explores some of the most influential deep learning architectures: Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), Generative Adversarial Networks (GANs), Transformers, and Encoder-Decoder architectures, highlighting their unique features, applications, and how they compare against each other.

Convolutional Neural Networks (CNNs)

CNNs are specialized deep neural networks for processing data with a grid-like topology, such as images. A CNN automatically detects the important features without any human supervision.

Today, AI is the most popular topic across many industries, and it is being developed for a range of different purposes....

I explain what is so unique about the RWKV language model.

Based on "Hands-On Machine Learning with Scikit-Learn & TensorFlow" (O'Reilly, Aurélien Géron) - bjpcjp/scikit-and-tensorflow-workbooks

Musings of a Computer Scientist.

Algorithms off the convex path.

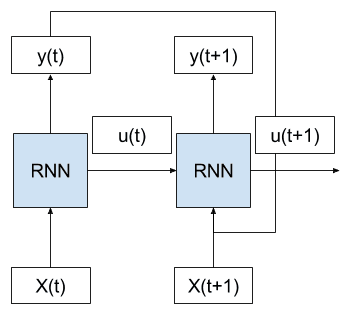

Recurrent neural networks are a type of neural network where the outputs from previous time steps are fed as input to the current time step. This creates a network graph or circuit diagram with cycles, which can make it difficult to understand how information moves through the network. In this post, you will discover the concept of unrolling or unfolding…
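
To make the idea of unrolling concrete, here is a minimal sketch in plain Python. It is not taken from the linked post: the scalar cell, the function names (`rnn_step`, `unrolled_forward`), and the weight values are all illustrative assumptions. The point it tries to show is that the cycle in the network graph becomes an ordinary loop, with the same weights reused at every time step and the previous hidden state passed forward.

```python
import math

def rnn_step(x_t, h_prev, w_xh, w_hh, b_h):
    """One step of a toy scalar RNN cell (hypothetical, for illustration).

    The new hidden state depends on the current input and on the hidden
    state produced at the previous time step.
    """
    return math.tanh(w_xh * x_t + w_hh * h_prev + b_h)

def unrolled_forward(xs, h0, w_xh, w_hh, b_h):
    """Unroll the recurrence over a sequence.

    The same cell (same weights) is applied once per time step, so the
    cyclic graph turns into a simple chain of len(xs) copies.
    """
    hidden_states = []
    h = h0
    for x_t in xs:
        h = rnn_step(x_t, h, w_xh, w_hh, b_h)
        hidden_states.append(h)
    return hidden_states

# Assumed toy inputs and weights, chosen only for the demonstration.
inputs = [1.0, 0.5, -1.0]
states = unrolled_forward(inputs, h0=0.0, w_xh=0.5, w_hh=0.8, b_h=0.0)

# Writing the three steps out by hand gives the same final state,
# which is exactly what "unrolling" means.
h1 = math.tanh(0.5 * 1.0 + 0.8 * 0.0)
h2 = math.tanh(0.5 * 0.5 + 0.8 * h1)
h3 = math.tanh(0.5 * -1.0 + 0.8 * h2)
```

Real RNN layers use weight matrices and vector-valued states rather than scalars, but the control flow is the same: one loop iteration per time step, with shared parameters.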

Recurrent Neural Network - A curated list of resources dedicated to RNN - kjw0612/awesome-rnn